On the 14th of January I gave a talk at a local technology meetup in Linz called Technologieplauscherl about the Universal Windows Platform. This post is the written form of the talk, which was intended for people who do not know UWP, but have a strong background in software development (and it's even better if you have some knowledge about C#/XAML based development).

And here is the presentation.

Introduction

The goal of the talk and this post is to give an overview of UWP. If you are a developer (or something related) working on a product which does not ship on Windows at the moment, but you want (or your boss wants) to ship a Windows version, then UWP is definitely a valid option today. My goal is that after the talk and this post you are able to decide whether UWP is a fit for your scenario, and that you are able to jump in and start experimenting with it.

On the other hand I think this "One code for every device" is a very interesting concept. If you are for example an iOS developer imagine that your app (the same app, the single package you create) is able to run on Mac, Apple Watch, iOS, iPad, iWhatever. Maybe you don't like this concept, but seeing a framework where this is possible is definitely beneficial.

What is UWP?

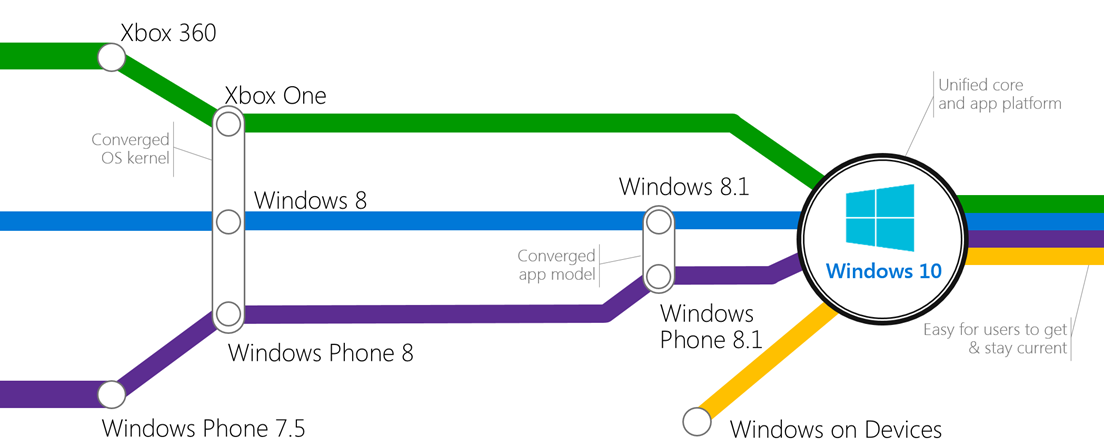

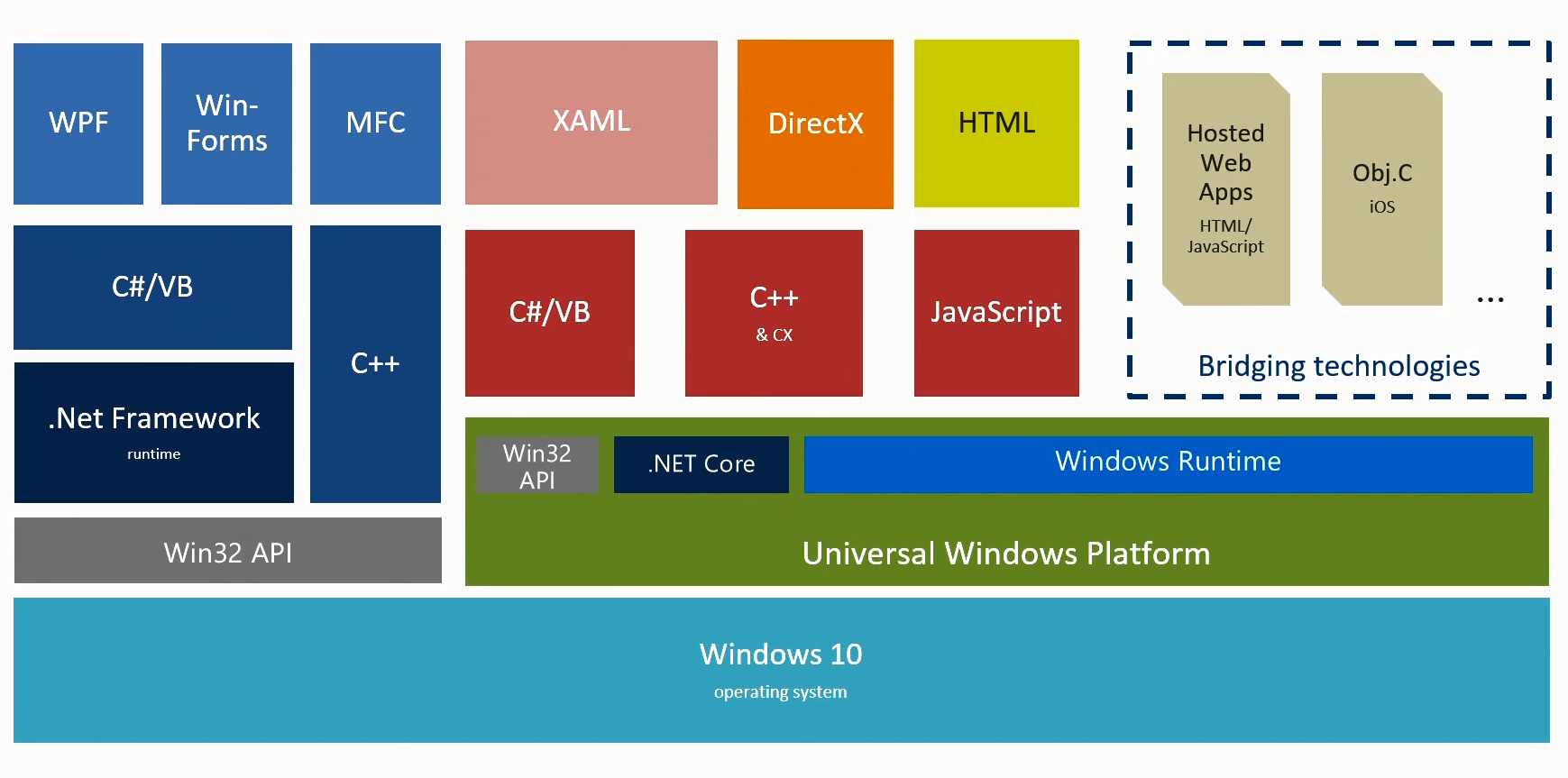

To understand the motivation behind UWP it makes sense to go back in time a little bit. In the past (let's say 10 years ago) Microsoft already had many platforms for which you were able to build applications: think about Windows Phone (or Windows Mobile if you started earlier), Windows Desktop with the .NET Framework and WinForms, then later WPF, Xbox, and so on. The programming models of these platforms were never radically different. C# has long been the language which you were able to use everywhere. When Windows Phone came along people basically wrote C#/XAML apps both for Windows Phone and for WPF, and later Windows Store apps were also implemented with C# and XAML. Of course there were many other options like C++, HTML, and JavaScript, but they all shared the same underlying framework. With Windows 8 we came to a point where the source code of a Windows Phone app and a Windows Store app in many cases looked very similar. In fact, in Windows 8.1 and Windows Phone 8.1 the app model was the same and you were able to write so-called Universal Apps, where you shared a huge amount of code between Phone and Desktop.

Source: Microsoft

This was not an accident: with Windows 8 Microsoft introduced the Windows Runtime, which is basically the successor of Win32. It is a low-level framework designed for devices which not only have mouse and keyboard, but also other input methods like touch screens and voice commands. But the Windows Runtime is not only a simple low-level library. It also has a so-called language projection layer: although it is written in unmanaged C++, the language projection layer projects these C++ classes into other languages like C# and JavaScript. The benefit of this compared to the old model (.NET on top of Win32, where you compiled to Intermediate Language) is that in this environment developers are also able to create unmanaged C++ applications based on the Windows Runtime (and they have the same libraries as C# developers with the same UI layer!). And even better: web developers with JavaScript and HTML knowledge are also able to use the same platform! These two things (unmanaged C++ and JavaScript for the whole application code) were never possible with .NET.

And the logical question is: why do we need this Windows Runtime? Isn't Win32 good enough? Well, Win32 is older than me and it was designed when touch and IoT devices were not on the horizon (I'm sure the original designers of Win32 never thought that it should run on a 4.5" phone). One fundamental difference is for example that many Windows Runtime APIs are asynchronous out of the box. The framework is prepared for things like executing CPU-heavy operations on background tasks to protect the UI thread, so the app remains responsive.

Here is a code snippet which we write in UWP. Think about how you would write this in Win32. (Note that you can start this from the UI thread and your UI will stay responsive, although the operation takes time...)

    public async Task LongRunningMethod()
    {
        var file = await Windows.Storage.ApplicationData.Current.LocalFolder
            .CreateFileAsync("VeryBigFile.dat",
                Windows.Storage.CreationCollisionOption.ReplaceExisting);

        using (var stream = await file.OpenStreamForWriteAsync())
        {
            using (var streamWriter = new StreamWriter(stream))
            {
                var lotsOfData = GetLotsOfData();
                await streamWriter.WriteAsync(lotsOfData);
            }
        }
    }

The Universal Windows Platform is the next iteration of the Windows Runtime: the Windows Runtime is still the heart of the Universal Windows Platform, but in Windows 10 it has been massively extended.

Source: Microsoft/Andy Wigley

With this, today we have one developer platform for all the devices which run Windows 10: the same for Mobile, Desktop, and other new things like IoT devices, HoloLens, the Surface Hub and even Xbox. All of them share the same runtime, which was originally introduced in Windows 8 and is now extended in Windows 10.

Adaptive UI

In the first section we looked at the motivation behind UWP, and now we know what it is. The first obvious question is this: OK, but how do you design a UI which works for both a 4" phone and a 55" Surface Hub?

For this you have many options. You can design dedicated UIs for different device families (this is what Apple did before iOS 9 with iPhone/iPad apps), but the real power is in the new concept called Adaptive UI: there are XAML controls (or, for JavaScript/HTML developers, HTML controls) which make it possible to declaratively design a single UI for multiple screen sizes.

First let's quickly see how we can design multiple UIs for different devices. But before we look into the code I would like to point out that in most cases you can completely avoid this! The recommended way to go is almost always the Adaptive UI, which I will introduce later.

So, first of all you can select a UI manually from your code. This was possible in pre UWP times and this is not different here. If you have a good reason to do something like this, then you can do it:

    // Get the diagonal size of the integrated display
    var dsc = new DisplaySizeHelper.DisplaySizeClass();
    double _actualSizeInInches = dsc.GetDisplaySizeInInches();

    // If the diagonal size is <= 7" use the OneHanded optimized view
    if (_actualSizeInInches > 0 && _actualSizeInInches <= ONEHANDEDSIZE)
    {
        rootFrame.Navigate(typeof(MainPage_OneHanded), e.Arguments);
    }
    else
    {
        rootFrame.Navigate(typeof(MainPage), e.Arguments);
    }

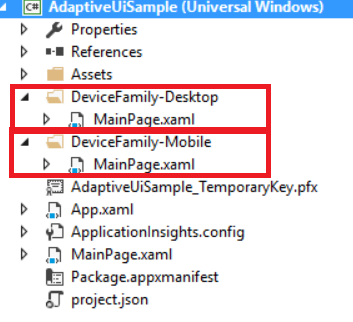

Another approach is to design specific UI for specific device families. This is supported by the framework: everything which you place into the DeviceFamily-Mobile folder will be loaded only if the code is running on a mobile device. The same applies for other device families.

In this case you add XAML views (without code-behind) to the device family specific folder. The code-behind of the main view (in this case MainPage.xaml.cs) will be shared across the different pages. If you create a XAML page with its own code-behind, that code-behind will be ignored. Another way to achieve the same is to embed the device family name into the filename instead of creating a folder for it. In this sample, if you add a MainPage.DeviceFamily-Mobile.xaml and a MainPage.DeviceFamily-Desktop.xaml, then you have basically the same thing without creating two extra folders.

Where I see the power of this is when you in general have one UI (built with Adaptive UI techniques, which will be introduced in the next paragraphs), but there is one device with a radically different UI, e.g. one UI for everything except Xbox. In that case you store your UI for Xbox in the folder DeviceFamily-Xbox (or embed the device family into the filename as described before) and leave your default UI in the root of the View folder.

But now we come to the real interesting stuff: Microsoft introduced a concept for Adaptive UI.

At the heart of this are the so-called Visual State Triggers and Visual State Setters. Together they form a so-called visual state.

The framework ships with a predefined trigger called AdaptiveTrigger, which has two properties: MinWindowWidth and MinWindowHeight.

Here is how you define a Trigger:

    <VisualState.StateTriggers>
        <AdaptiveTrigger MinWindowWidth="0"/>
    </VisualState.StateTriggers>

The other part of the state are the so called Setters:

    <VisualState.Setters>
        <Setter Target="HelloWorldTextBox.FontSize" Value="12" />
    </VisualState.Setters>

As you see, these are normal properties which are applied to the targeted UI elements. And when are these setters applied? Yes, when the trigger becomes active (meaning its condition is met).

Here are three states defined as a very simple sample.

    <VisualStateManager.VisualStateGroups>
        <VisualStateGroup>
            <VisualState x:Name="Small">
                <VisualState.Setters>
                    <Setter Target="HelloWorldTextBox.FontSize" Value="12" />
                </VisualState.Setters>
                <VisualState.StateTriggers>
                    <AdaptiveTrigger MinWindowWidth="0"/>
                </VisualState.StateTriggers>
            </VisualState>
            <VisualState x:Name="Medium">
                <VisualState.Setters>
                    <Setter Target="HelloWorldTextBox.FontSize" Value="32" />
                </VisualState.Setters>
                <VisualState.StateTriggers>
                    <AdaptiveTrigger MinWindowWidth="600"/>
                </VisualState.StateTriggers>
            </VisualState>
            <VisualState x:Name="Large">
                <VisualState.Setters>
                    <Setter Target="HelloWorldTextBox.FontSize" Value="62" />
                </VisualState.Setters>
                <VisualState.StateTriggers>
                    <AdaptiveTrigger MinWindowWidth="800"/>
                </VisualState.StateTriggers>
            </VisualState>
        </VisualStateGroup>
    </VisualStateManager.VisualStateGroups>
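To make the selection rule more tangible, here is a minimal sketch in plain C# of how the three states above could be resolved for a given window width. This is a simplified model and not the framework's implementation: `AdaptiveStateResolver` and `Resolve` are hypothetical names, only `MinWindowWidth` is modelled, and the sketch assumes that among all triggers whose condition is met the one with the largest threshold wins.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper that models how the Small/Medium/Large states are picked.
public static class AdaptiveStateResolver
{
    // State names and MinWindowWidth values copied from the XAML sample.
    private static readonly Dictionary<string, double> MinWidths =
        new Dictionary<string, double>
        {
            { "Small", 0 },
            { "Medium", 600 },
            { "Large", 800 }
        };

    // Returns the state whose MinWindowWidth is the largest value that is
    // still less than or equal to the window width (null if none matches).
    public static string Resolve(double windowWidth)
    {
        return MinWidths
            .Where(state => windowWidth >= state.Value)
            .OrderByDescending(state => state.Value)
            .Select(state => state.Key)
            .FirstOrDefault();
    }
}
```

With these thresholds a window that is 360 wide resolves to Small, a window that is 700 wide resolves to Medium, and everything from 800 upwards resolves to Large.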

Another new UI control which fits in here is the RelativePanel. You can also combine it with Visual States and completely reorder items within the RelativePanel in different states. For a detailed description of the RelativePanel go here.

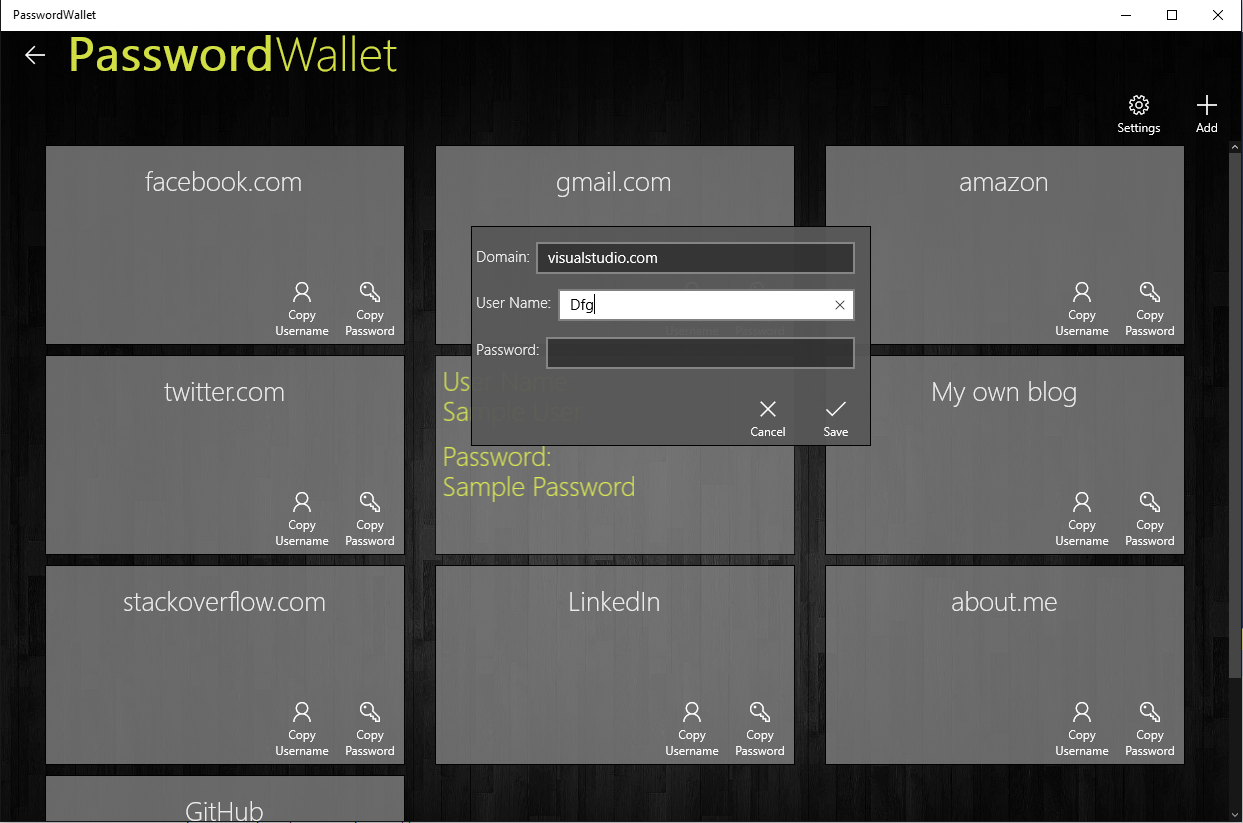

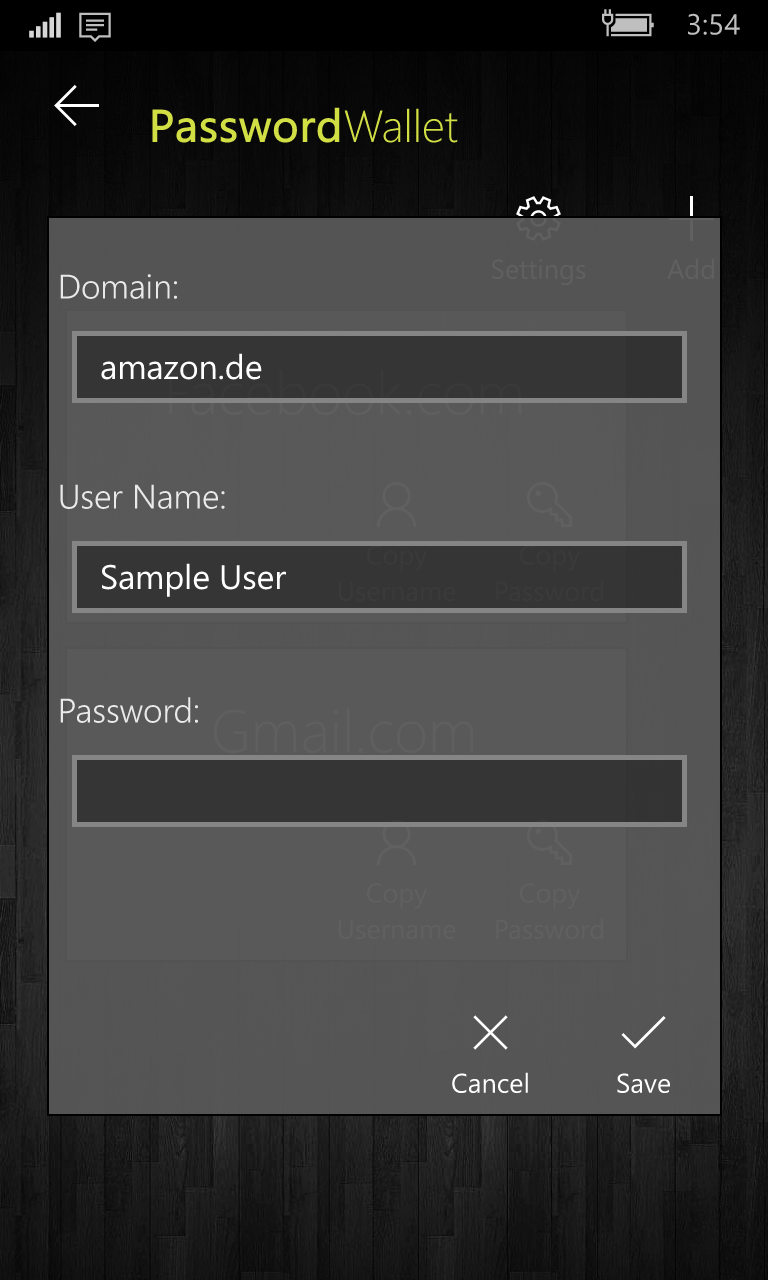

With this concept you can adapt your UI to any screen size very easily. Here is a sample from my app: the first screen is from a Desktop PC, and the second one is from a Phone. Both of them are the same XAML page.

And finally here you find my Hello World sample for this.

Adaptive Code

The second obvious big question is this: How can I create one binary package which contains e.g. IoT specific code (let's say controlling an analog signal) and also has phone specific code (like dialing a phone number) plus it still runs on a PC?!

This is not specific to Windows: as soon as your code is considered "cross platform" you face the same challenge. The first naive solution is ifdefs. And in fact, when you shared code between Windows 8.1 and Windows Phone 8.1, something like this was very common:

    #if WINDOWS_PHONE_APP
        Windows.Phone.UI.Input.HardwareButtons.BackPressed
            += this.HardwareButtons_BackPressed;
    #endif

Now this is ugly! It does not scale: what if we have 5 platforms? And even if we have only two platforms how to test it properly? Write 2 unit tests? One for phone one for desktop? That would be crazy! So let's agree that ifdef is not the way to go!

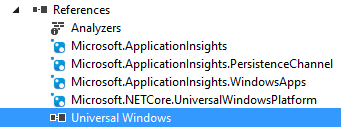

To solve this elegantly the concept of Platform Specific APIs was created. When you create a UWP app with File > New Project, a reference to the Universal Device Family is added. This is where all the APIs live which work on every platform: think about things like DateTime, IAsyncAction and stuff like that.

In fact, according to Microsoft more than 80% of all the UWP types live here in this common part. For example, my PasswordWallet app uses only these kinds of types, so for my scenario this is really enough.

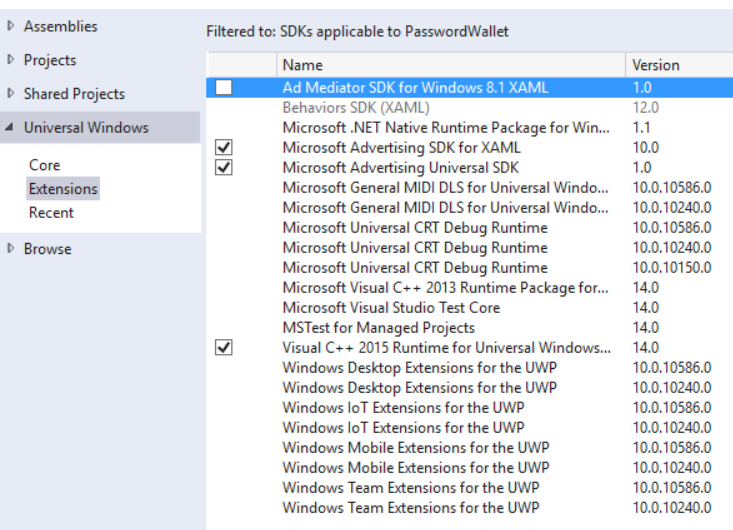

But when the time comes and you have to use some device specific types (let's say you have a Phone and want to know when the user pushes the hardware camera button, or you want to make a phone call) you can add so-called platform specific extensions. (These are normal references, just right-click the project and go to Add Reference.)

So for our scenario we have to add the "Windows Mobile Extensions for the UWP". After that we can register for the event like this:

    Windows.Phone.UI.Input.HardwareButtons.CameraPressed
        += CameraButtonPressed;

Now what happens when this is executed on a non-Mobile device? Right: it will crash. But not because we added a mobile specific reference! That itself does not invalidate the package for other device families. The problem is that the type Windows.Phone.UI.Input.HardwareButtons, and with it the CameraPressed event, does not exist on non-Mobile devices.

And exactly for this there is the ApiInformation API: With that you can query what kinds of APIs are available on the current device and based on that you either execute or do not execute some specific code.

    var api = "Windows.Phone.UI.Input.HardwareButtons";

    if (Windows.Foundation.Metadata.ApiInformation.IsTypePresent(api))
    {
        Windows.Phone.UI.Input.HardwareButtons.CameraPressed
            += CameraButtonPressed;
    }

Isn't this the same as ifdefs?! No! All these are runtime checks, while ifdef is a compile time construct. With this you are still able to create a single package, and you are still able to write a single unit test. If it runs on a phone then it goes into the if block, if it's not a phone it jumps over it, but the if block is still in the binary and it is absolutely valid for every device family.
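If you depend on a single member only, the runtime check can be narrower than the whole type. The following is a minimal sketch, assuming the project references the Windows Mobile Extensions for the UWP: it uses `ApiInformation.IsEventPresent`, which takes the type name and the event name. The class name `CameraButtonRegistration` and the method name `TryRegister` are hypothetical and only serve the illustration.

```csharp
using System;
using Windows.Foundation.Metadata;
using Windows.Phone.UI.Input;

// Hypothetical helper; not part of the framework.
public static class CameraButtonRegistration
{
    private const string HardwareButtonsType =
        "Windows.Phone.UI.Input.HardwareButtons";

    // Subscribes the handler only if the CameraPressed event exists on the
    // current device. Returns true if the handler was subscribed,
    // false if the registration was skipped.
    public static bool TryRegister(EventHandler<CameraEventArgs> handler)
    {
        if (!ApiInformation.IsEventPresent(HardwareButtonsType, "CameraPressed"))
        {
            return false;
        }

        HardwareButtons.CameraPressed += handler;
        return true;
    }
}
```

Returning a bool is just one possible design: it lets the caller decide whether to show camera related UI at all when the hardware button is not available.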

Cortana integration

Of course there are many other cool things you can do with UWP. I wanted to show at least one of them, and the one I picked is Cortana integration. For my own app, which I used for showing adaptive UI, this does not make too much sense, so I used a demo from Microsoft.

There are two options how your app can react to a voice command:

- Your app can be simply started (see here)

- Your app can define a background task, register it as an App Service, and this App Service can react to the voice command without opening the app in the foreground (see here)

In both cases the first thing you need is a Voice Command Definition (VCD) file. This is an XML file which basically describes which commands your app listens to. There is documentation about this on MSDN.

    <?xml version="1.0" encoding="utf-8"?>
    <VoiceCommands xmlns="http://schemas.microsoft.com/voicecommands/1.2">
      <CommandSet xml:lang="en-us" Name="commandSet_en-us">
        <CommandPrefix> Adventure Works, </CommandPrefix>
        <Example> When is my trip to Las Vegas? </Example>

        <Command Name="whenIsTripToDestination">
          <Example> When is my trip to Las Vegas?</Example>
          <ListenFor> when is [my] trip to {destination} </ListenFor>
          <Feedback> Looking for trip to {destination} </Feedback>
          <VoiceCommandService Target="AdventureWorksVoiceCommandService"/>
        </Command>

        <PhraseList Label="destination">
          <Item> Las Vegas </Item>
          <Item> Dallas </Item>
          <Item> New York </Item>
        </PhraseList>
      </CommandSet>

      <!-- Other CommandSets for other languages -->
    </VoiceCommands>
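A VCD file does nothing until the app registers it with the system. A minimal sketch of that step could look like this, assuming the file is shipped in the app package under the name AdventureWorksCommands.xml (the file name as well as the `VoiceCommandRegistration` helper and its `InstallAsync` method are assumptions for this illustration). A typical place to call it would be the app's OnLaunched method.

```csharp
using System;
using System.Threading.Tasks;
using Windows.ApplicationModel;
using Windows.ApplicationModel.VoiceCommands;
using Windows.Storage;

// Hypothetical helper; not part of the framework.
public static class VoiceCommandRegistration
{
    // Loads the VCD file from the app package and registers its command
    // sets, so that Cortana knows which phrases belong to this app.
    public static async Task InstallAsync()
    {
        // Assumed file name; use the name of your own VCD file here.
        StorageFile vcdFile = await Package.Current.InstalledLocation
            .GetFileAsync("AdventureWorksCommands.xml");

        await VoiceCommandDefinitionManager
            .InstallCommandDefinitionsFromStorageFileAsync(vcdFile);
    }
}
```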

There are two options to react to a voice command: either you start the application, or you activate an App Service.

How to start the app is documented here. Basically, in the Command tag you define <Navigate/> instead of <VoiceCommandService Target="..."/>. When you use Navigate, the app will be started and the OnActivated method will be called. The Kind property of the IActivatedEventArgs argument will be set to ActivationKind.VoiceCommand.

    protected override void OnActivated(IActivatedEventArgs args)
    {
        base.OnActivated(args);

        if (args.Kind == ActivationKind.VoiceCommand)
        {
            // The app was activated by Cortana
        }
    }
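Inside that if block you usually want to know which command was spoken. Here is a small sketch of how this could be read from the activation arguments; the `VoiceActivationHelper` class and its `GetCommandName` method are hypothetical names, while the cast to `VoiceCommandActivatedEventArgs` and the `Result.RulePath` property come from the platform API.

```csharp
using Windows.ApplicationModel.Activation;
using Windows.Media.SpeechRecognition;

// Hypothetical helper; not part of the framework.
public static class VoiceActivationHelper
{
    // Returns the Name attribute of the matched Command element from the
    // VCD file, or null if the app was not activated by a voice command.
    public static string GetCommandName(IActivatedEventArgs args)
    {
        if (args.Kind != ActivationKind.VoiceCommand)
        {
            return null;
        }

        var voiceArgs = args as VoiceCommandActivatedEventArgs;
        if (voiceArgs == null)
        {
            return null;
        }

        SpeechRecognitionResult result = voiceArgs.Result;

        // The first element of RulePath is the name of the matched command;
        // result.Text would contain the recognized phrase itself.
        return result.RulePath[0];
    }
}
```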

OK, so let's go back to the VCD file above: with that definition the app itself won't be activated, only its App Service. It defines that when the user says "Adventure Works, when is my trip to Las Vegas?" the App Service gets triggered. For this you have to create a background task for the app and register it as an App Service.

There are many more cool things regarding this, but I just wanted to show the basics of the framework. The MSDN documentation for Cortana integration explains every detail of it.
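A rough sketch of such a background task is shown below. It is not the code of the Microsoft demo: the namespace is hypothetical, the answer text is a placeholder, and it assumes that the App Service is declared in the app manifest under the name AdventureWorksVoiceCommandService (the value of the Target attribute in the VCD file) and that the class lives in a Windows Runtime Component project.

```csharp
using System;
using Windows.ApplicationModel.AppService;
using Windows.ApplicationModel.Background;
using Windows.ApplicationModel.VoiceCommands;

namespace AdventureWorks.VoiceCommands // hypothetical namespace
{
    public sealed class AdventureWorksVoiceCommandService : IBackgroundTask
    {
        public async void Run(IBackgroundTaskInstance taskInstance)
        {
            // Keep the task alive while the asynchronous calls are running.
            BackgroundTaskDeferral deferral = taskInstance.GetDeferral();
            try
            {
                var details = taskInstance.TriggerDetails as AppServiceTriggerDetails;
                if (details == null ||
                    details.Name != "AdventureWorksVoiceCommandService")
                {
                    return; // Not triggered by our voice command service.
                }

                VoiceCommandServiceConnection connection =
                    VoiceCommandServiceConnection.FromAppServiceTriggerDetails(details);
                VoiceCommand command = await connection.GetVoiceCommandAsync();

                if (command.CommandName == "whenIsTripToDestination")
                {
                    // The value the user said for {destination}.
                    string destination = command.Properties["destination"][0];

                    // Placeholder answer; a real app would look up the trip here.
                    var message = new VoiceCommandUserMessage
                    {
                        DisplayMessage = "Looking up your trip to " + destination,
                        SpokenMessage = "Looking up your trip to " + destination
                    };

                    await connection.ReportSuccessAsync(
                        VoiceCommandResponse.CreateResponse(message));
                }
            }
            finally
            {
                deferral.Complete();
            }
        }
    }
}
```

The deferral is completed in a finally block so that the task is released on every path, including the early return.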

Summary

I hope that the talk and the post gave an informative overview of UWP and that you saw some interesting concepts. If you want to learn more about UWP I recommend "A Developer's guide to UWP" as the starting point. If you have any comments or questions don't hesitate to contact me (either here as a comment or via Twitter @gregkalapos).

Some links to start